April 20

Workshop: Machine Learning Kickstart by PAPIs

Introducing concepts, techniques and tools to integrate ML into your applications

Content:
This course provides a pragmatic, hands-on introduction to ML in Python. It adopts the mindset of a developer looking to integrate ML into a real-world application. The approach is top-down and driven by experimentation and results.

We begin with some basic concepts, example use cases of ML, and how to formalize an ML problem. We’ll go over the intuitions behind some of the ML techniques most widely used in industry (nearest neighbors, linear models, decision trees, random forests), how they work at a high level, and their main parameters. You’ll put this into practice by creating your first predictive models with the open source Python library scikit-learn.

In the second part of the course, we’ll look at methods and metrics to evaluate the performance of models. You’ll experiment with different model and evaluation parameters, and we’ll interpret the results. We’ll then discuss and demonstrate how to deploy your model of choice in production: how to use cloud ML platforms for this, and how to create our own predictive APIs to expose models.

We’ll finish the day by considering some of the limitations of ML, and by learning how to inspect and prepare the data to learn from (using the Pandas Python library). Throughout the course we’ll use classical ML datasets (from UCI) and a messy real-world dataset scraped from the web.

Syllabus:

Introduction to ML

- Key ML concepts and terminology

- Possibilities and example use cases in apps and business

- How to formalize your own ML problem

Model creation

- Learning techniques: nearest neighbors, linear models, decision trees

- Improving prediction accuracy with ensembles: from decision trees to random forests

- Introduction to Jupyter notebooks and recap of Python basics

- Creating predictive models with scikit-learn
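
The nearest-neighbors intuition from this part of the syllabus can be sketched without any library. This is only an illustration on made-up toy data, not the course material itself (the course builds its models with scikit-learn); the function name `knn_predict` and the data are assumptions.

```python
from collections import Counter
import math

def knn_predict(train_points, train_labels, query, k=3):
    """Predict the label of `query` by majority vote among its k nearest training points."""
    # Euclidean distance from the query to every training point
    distances = [
        (math.dist(point, query), label)
        for point, label in zip(train_points, train_labels)
    ]
    # Keep the k closest neighbors and count their labels
    nearest = sorted(distances, key=lambda pair: pair[0])[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]

# Toy data (made up for illustration): two well-separated groups
points = [(1.0, 1.0), (1.5, 2.0), (2.0, 1.5), (8.0, 8.0), (8.5, 9.0), (9.0, 8.5)]
labels = ["small", "small", "small", "large", "large", "large"]

prediction_near_origin = knn_predict(points, labels, (1.2, 1.4))
prediction_far = knn_predict(points, labels, (8.7, 8.2))
```

The main parameter here is `k`: a small value follows the training data closely, while a larger value smooths predictions over more neighbors.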

Evaluation

- Performance of ML models: criteria, evaluation method, metrics and baselines

- Evaluation and cross validation of learning techniques with scikit-learn

- Efficient comparison from the command line with SKLL

- ML-as-a-Service in the cloud with BigML (Python wrappers, command-line tool and web dashboard)
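
To make the ideas of cross validation and baselines concrete, here is a minimal library-free sketch. It assumes contiguous folds and a "predict the most common label" baseline; the helper names and the labels are made up for illustration and are not taken from the course notebooks.

```python
from collections import Counter

def k_fold_indices(n_samples, k):
    """Yield (train_indices, test_indices) pairs for k contiguous folds."""
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        test = list(range(start, start + size))
        train = [i for i in range(n_samples) if i < start or i >= start + size]
        yield train, test
        start += size

def majority_baseline_accuracy(labels, k=5):
    """Cross-validated accuracy of a baseline that always predicts
    the most common label seen in the training folds."""
    scores = []
    for train, test in k_fold_indices(len(labels), k):
        majority = Counter(labels[i] for i in train).most_common(1)[0][0]
        correct = sum(1 for i in test if labels[i] == majority)
        scores.append(correct / len(test))
    return sum(scores) / len(scores)

# Made-up labels: 8 negatives and 2 positives
labels = [0, 0, 0, 0, 1, 0, 0, 0, 0, 1]
folds = list(k_fold_indices(len(labels), 5))
baseline = majority_baseline_accuracy(labels, k=5)
```

On these made-up labels the baseline already reaches 0.8 accuracy without learning anything, which is why a model's score only means something when compared with a baseline.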

Operationalization

- How REST APIs work and why they matter for real-world ML

- Demo of cloud APIs: BigML and Indico

- Deploying your own Python models as APIs with Flask or with Microsoft Azure ML

Data preparation

- Limitations of ML

- Feature engineering

- Finding issues in data and fixing them in Pandas

Student requirements:
You need to bring your own laptop for hands-on practical work (command line and Python notebooks). Some basic maths knowledge (calculus, linear algebra, statistics) will be useful to understand some of the theory behind model creation, but it isn’t a hard requirement. To learn even more about ML, see our other training: “Improving ML workflows by PAPIs”.

Tools and libraries:

- Open source: Jupyter, scikit-learn, Pandas, SKLL, Flask

- Commercial: BigML, Dataiku, Microsoft Azure ML, Indico

Extras:
At the end of the training, you will get an electronic copy of the book Bootstrapping Machine Learning, as well as Jupyter notebooks including Python code.

April 21

Workshop: Improving ML workflows by PAPIs

Improving your usage of ML with the hottest techniques

Content:
This fast-paced course shows you how to go further in Machine Learning by improving your workflows and tackling new problems where the input data may not be tabular (e.g. text and images) or where example outputs are not given (i.e. “unsupervised learning”). We’ll be using open source Python libraries as well as cloud APIs.

We’ll start with a quick recap of ML model creation and evaluation, and we’ll go into the subtleties of performance metrics for classification. We’ll experiment with thresholding soft classifiers to find optimal trade-offs between competing metrics. You’ll learn how to extend this to text classification by pre-processing text, extracting numerical features from it, and selecting the best ones.

We’ll get familiar with the popular XGBoost library (which is behind many winning solutions in recent Kaggle challenges) in an attempt to further improve the accuracy of our predictions. This will be complemented by Hyperopt, a library for intelligent parameter tuning, which we’ll use to find the best configurations for XGBoost.

The last part of the course will cover Unsupervised Learning, with clustering and anomaly detection techniques (to be used on their own or to augment existing supervised learning pipelines), and Deep Learning. For the latter, we’ll start by introducing the perceptron algorithm, which will lead to neural networks. You’ll get to use deep neural network models with the Keras and TensorFlow libraries. You’ll learn how to make the most of Deep Learning when you don’t have sufficient data, by integrating feature extraction and transfer learning in your workflows. This will be illustrated on image data.
We assume that you are a developer who has spent some time looking at ML. You’re familiar with basic modeling techniques such as decision trees and linear models, and with basic evaluation methods. This training is a follow-up to the “ML Kickstart” training, where these notions are covered.

Syllabus:

Improving classifiers

- Recap of model creation with Logistic Regression

- Recap of evaluation methods: simple split and cross validation

- Performance metrics for classification, and trade-offs

- Soft-classifier threshold tuning
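
The trade-off behind soft-classifier threshold tuning can be sketched in a few lines. This assumes a classifier that outputs a score per example and uses F1 as the criterion to optimize; the labels, scores, candidate thresholds and function names are made up for illustration.

```python
def precision_recall(y_true, scores, threshold):
    """Precision and recall when predicting positive for scores >= threshold."""
    predicted = [s >= threshold for s in scores]
    tp = sum(1 for p, y in zip(predicted, y_true) if p and y == 1)
    fp = sum(1 for p, y in zip(predicted, y_true) if p and y == 0)
    fn = sum(1 for p, y in zip(predicted, y_true) if not p and y == 1)
    precision = tp / (tp + fp) if tp + fp else 1.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

def best_f1_threshold(y_true, scores, candidates):
    """Return the candidate threshold with the highest F1 score (first one wins ties)."""
    best_threshold, best_f1 = None, -1.0
    for t in candidates:
        p, r = precision_recall(y_true, scores, t)
        f1 = 2 * p * r / (p + r) if p + r else 0.0
        if f1 > best_f1:
            best_threshold, best_f1 = t, f1
    return best_threshold, best_f1

# Made-up labels and predicted scores
y_true = [0, 0, 1, 0, 1, 1, 0, 1]
scores = [0.1, 0.3, 0.35, 0.4, 0.6, 0.7, 0.8, 0.9]

low = precision_recall(y_true, scores, 0.3)    # permissive threshold
high = precision_recall(y_true, scores, 0.75)  # strict threshold
threshold, f1 = best_f1_threshold(y_true, scores, [0.25, 0.35, 0.5, 0.75])
```

A permissive threshold catches every positive at the cost of precision, while a strict one does the opposite; which point to pick depends on the relative cost of false positives and false negatives in the application.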

Feature extraction and selection for text

- Text pre-processing tips

- Feature extraction: bag of words and n-grams

- Feature selection techniques

- Creating pipelines in scikit-learn and tuning them via grid search
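
Bag-of-words and n-gram feature extraction can be illustrated with the standard library alone. This is a simplified sketch with a naive tokenizer and made-up documents; in practice a library vectorizer would be used, and the helper names here are hypothetical.

```python
from collections import Counter
import re

def tokenize(text):
    """Lowercase the text and split it into word tokens (letters, digits, apostrophes)."""
    return re.findall(r"[a-z0-9']+", text.lower())

def ngram_counts(text, n_max=2):
    """Count all word n-grams of length 1..n_max in the text."""
    tokens = tokenize(text)
    counts = Counter()
    for n in range(1, n_max + 1):
        for i in range(len(tokens) - n + 1):
            counts[" ".join(tokens[i:i + n])] += 1
    return counts

def vectorize(texts, n_max=2):
    """Turn texts into count vectors over a shared, sorted vocabulary."""
    per_text = [ngram_counts(t, n_max) for t in texts]
    vocabulary = sorted(set().union(*per_text))
    vectors = [[counts[term] for term in vocabulary] for counts in per_text]
    return vocabulary, vectors

# Made-up documents with opposite meanings but nearly identical words
docs = ["The movie was good", "The movie was not good"]
vocabulary, vectors = vectorize(docs)
```

With single words only, the two documents differ just by the token "not"; adding bigrams yields features such as "not good" and "was good" that separate them more directly.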

Improving models with boosting and parameter tuning

- Recap of decision trees and ensembles

- Gradient boosting and XGBoost

- “ML for ML”: intelligent parameter tuning with Hyperopt

- Comparison with classical methods

Unsupervised learning

- Clustering (k-means)

- Anomaly detection (isolation forests)

- Visualizations on BigML
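
The k-means clustering listed above alternates two steps until nothing changes. The sketch below is one plain-Python version of that loop (Lloyd's algorithm) with fixed starting centroids; the data, the starting centroids and the function name are assumptions chosen for illustration.

```python
import math

def kmeans(points, initial_centroids, n_iterations=10):
    """Lloyd's algorithm: alternate assigning points and recomputing centroids."""
    centroids = [tuple(c) for c in initial_centroids]
    assignments = []
    for _ in range(n_iterations):
        # Assignment step: index of the closest centroid for each point
        assignments = [
            min(range(len(centroids)), key=lambda j: math.dist(p, centroids[j]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its assigned points
        new_centroids = []
        for j, old in enumerate(centroids):
            members = [p for p, a in zip(points, assignments) if a == j]
            if members:
                new_centroids.append(
                    tuple(sum(axis) / len(members) for axis in zip(*members))
                )
            else:
                new_centroids.append(old)  # keep an empty cluster's centroid in place
        if new_centroids == centroids:
            break  # converged: centroids no longer move
        centroids = new_centroids
    return centroids, assignments

# Made-up 2D points forming two groups
points = [(1.0, 1.0), (1.0, 2.0), (2.0, 1.0), (9.0, 9.0), (9.0, 10.0), (10.0, 9.0)]
centroids, assignments = kmeans(points, [(0.0, 0.0), (10.0, 10.0)])
```

No labels are supplied at any point: the grouping comes only from the distances between points, which is what makes the technique unsupervised.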

Deep Learning

- Perceptron algorithm (single-layer and multi-layer)

- Neural network training and predictions with Keras and TensorFlow

- Feature extraction for images with the Indico API

- Transfer Learning
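
The single-layer perceptron that opens the Deep Learning part fits in a short plain-Python sketch. It assumes labels encoded as +1 and -1 and uses the logical AND function as a made-up, linearly separable example; it is not the Keras or TensorFlow code used in the course.

```python
def train_perceptron(samples, labels, n_epochs=20, learning_rate=1.0):
    """Train a single-layer perceptron; labels must be +1 or -1."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for _ in range(n_epochs):
        mistakes = 0
        for x, y in zip(samples, labels):
            activation = sum(w * xi for w, xi in zip(weights, x)) + bias
            if y * activation <= 0:  # misclassified (or exactly on the boundary)
                # Nudge the boundary toward classifying this sample correctly
                weights = [w + learning_rate * y * xi for w, xi in zip(weights, x)]
                bias += learning_rate * y
                mistakes += 1
        if mistakes == 0:
            break  # every sample is classified correctly
    return weights, bias

def predict(weights, bias, x):
    """Return +1 or -1 depending on which side of the boundary x falls."""
    return 1 if sum(w * xi for w, xi in zip(weights, x)) + bias > 0 else -1

# Logical AND, a linearly separable problem
samples = [(0, 0), (0, 1), (1, 0), (1, 1)]
labels = [-1, -1, -1, 1]
weights, bias = train_perceptron(samples, labels)
predictions = [predict(weights, bias, x) for x in samples]
```

A single perceptron can only learn linearly separable problems such as this one, which is the limitation that stacking layers into a neural network is meant to overcome.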

Student requirements:
You need to bring your own laptop for hands-on practical work (command line and Python notebooks). Prior knowledge of ML is required (see above); see also our other training, “ML Kickstart by PAPIs”.

Tools and libraries:

- Open source: Jupyter, scikit-learn, NLTK, XGBoost, Hyperopt, Keras, TensorFlow

- Commercial: BigML, Dataiku, Indico

Extras:
At the end of the training, you will get an electronic copy of the book Bootstrapping Machine Learning, as well as Jupyter notebooks including Python code.

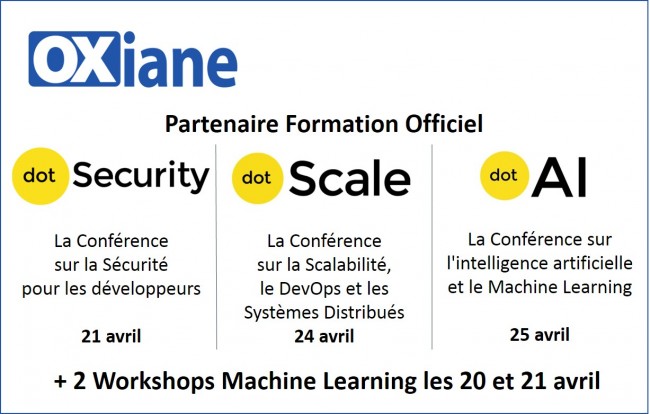

is the training partner


April 25

2 Machine Learning workshops on April 20 and 21

Prices:
